research

Ongoing and completed research projects by MAPS Lab

Scaling Foundation Models for Enhanced Perception in Embodied Agents

Scaling large foundation models (e.g., CLIP, DINO and SAM) to local compute holds the promise of revolutionizing how embodied agents, such as robots, AR devices, and IoT devices, interact with and comprehend their environments. At the heart of this endeavor is the integration of advanced visual and visual-language understanding capabilities into these agents, enabling them to interpret and respond to the complex, dynamic world around them. Recent advancements in AI and machine learning have paved the way for such models to be adapted for use in real-world settings, where they can offer strong context-awareness in a zero-shot manner. However, deploying these foundation models in diverse and often unpredictable environments poses significant challenges. These include ensuring generalization across the different sensors carried by the agents, optimizing for computational efficiency on limited hardware, and achieving seamless interaction with human users and other machines. In our research, we explore the full spectrum of these challenges, from adapting the models for efficient real-time processing to enhancing their understanding across different sensor inputs. This is a new research line in the lab, and more research outputs are expected.

Research Output: [ECCV’24a], [IROS’24], [ICRA-W’2023]

Robust Spatial Perception for Autonomous Vehicles in the Wild

Autonomous driving, also known as mobile autonomy, promises to radically change the cityscape and save many human lives. A key pillar of mobile autonomy is spatial perception: the ability of vehicles to understand the ambient environment and localize themselves on the road. Thanks to recent advances in computer vision and solid-state technology, spatial perception has improved greatly in controlled environments. Nevertheless, the perception robustness of many systems still falls far short of the safety requirements of autonomous driving in the wild, where they face bad weather, poor illumination, various dynamic objects, or even malicious attacks. In the MAPS lab, we study robust spatial perception across the full stack, ranging from single-modality methods to multi-sensory fusion and perception uncertainty quantification. More recently, a focus of my lab has been unlocking the potential of 4D automotive radar in localization and scene understanding. As an emerging sensor, automotive radar is known for its sensing robustness against bad weather and adverse illumination. However, due to its significantly lower data quality, it remains largely unknown how one can translate radar's sensing robustness into effective vehicle perception, a question my team is keen to answer.

Research Output: [NeurIPS’2024], [ICRA’2024a], [ICRA’2024b], [CVPR’2023], [IROS’2023], [RA-L’2023], [NDSS’2023], [RA-L/IROS’2022], [IROS’2022a], [SECON’2022], [ICRA’2022a], [ICRA’2022b], [TNNLS’2022], [TNNLS’2021], [AAAI’2020], [CVPR’2019]

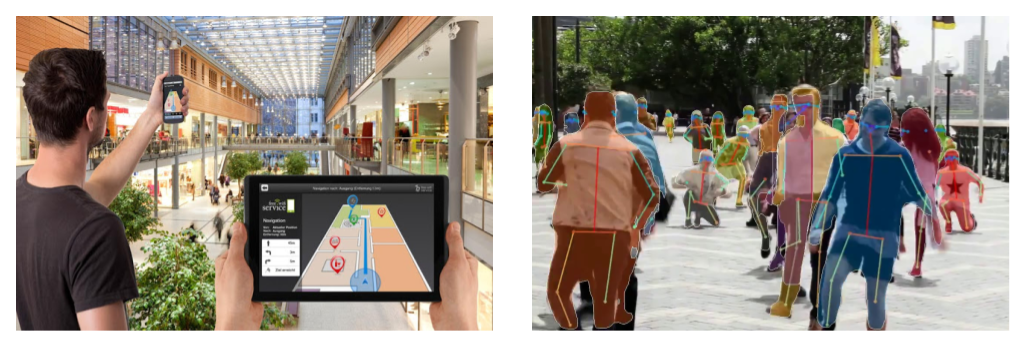

Privacy-aware and Low-Cost Human Motion Sensing

Despite a plethora of methods being proposed over recent decades, today’s human sensing systems, primarily those designed to track location and monitor activities, often rely on RGB cameras. These methods encounter difficulties in adverse lighting conditions and raise increasing privacy concerns in domestic environments. Moving away from camera-centric solutions, the challenge intensifies when designing for low-resolution sensors, such as mmWave, WiFi, UWB, BLE, and inertial measurement units. This complexity arises because humans do not naturally perceive the world through these modalities, which makes it hard to draw on everyday experience when improving algorithms for these sensors. Leveraging the latest advancements in machine learning, we advocate for AI-empowered methods to analyze data from these sensors, extending their utility. Our research also examines whether these low-cost, low-resolution sensors can be used for fine-grained pose estimation, such as gestures and hand movements, beyond mere localization. Importantly, we aim to develop methods that are more acceptable to users, particularly the aging population, ensuring our solutions are both effective and sensitive to the privacy and usability needs of this demographic.

Research Output: [SenSys’25], [ECCV’24b], [ICRA’2024c], [NeurIPS’2023], [Patterns’2023], [UbiComp’2023], [IoT-J’2023], [UbiComp’2022], [IoT-J’2022], [ICCV’2021], [TMC’2021], [TNNLS’2021], [ICRA’2020], [TMC’2019], [AAAI’2019], [DCOSS’2019], [AAAI’2018], [MobiCom’2018], [TWC’2016]

Robust and Rapid Sense Augmentation Support for First Responders (completed)

From the equator to the Arctic, fire disasters are going to happen more often as a result of anthropogenic climate change. This in turn results in more frequent call-outs for firefighters. However, at present, firefighting is still regarded as one of the most strenuous and dangerous jobs in the world. A fire incident is often accompanied by a variety of airborne obscurants (e.g., smoke and dust) and poor illumination, making it difficult for firefighters to navigate and understand the fire ground. We aim to design robust yet real-time localization, mapping and scene understanding services that can be directly integrated into augmented reality wearables and firefighting robots. These support systems will, in turn, enhance firefighters’ operational capacity and safety in visually degraded conditions and on resource-constrained platforms.

Research Output: [EWSN’2023], [TR-O’2022], [IROS’2022b], [CPS-ER’2022], [ICRA’2021], [SenSys’2020], [MobiSys’2020], [RA-L’2020]

Media Exposure: BBC News, BBC Good Morning Scotland, STV, Planet Radio, Sky News, Evening Standard Tech & Science Daily, Scottish Daily Express, The Independent, Scottish Field, Scottish Daily Mail, Italy 24 News, Irish News, Engineering & Technology, Digit News

Robust Identity Inference across Digital and Physical Worlds (completed)

Key to realizing the vision of human-centred computing is the ability of machines to recognize people, so that spaces and devices can become truly personalized. However, the unpredictability of real-world environments undermines robust recognition, limiting usability. In real conditions, human identification systems have to handle issues such as out-of-set subjects and domain deviations, for which conventional supervised learning approaches to training and inference are poorly suited. With the rapid development of the Internet of Things (IoT), we advocate a new labelling method that exploits signals of opportunity hidden in heterogeneous IoT data. The key insight is that one sensor modality can leverage the signals measured by other co-located sensor modalities to improve its own labelling performance. If identity associations between heterogeneous sensor data can be discovered, it is possible to label data automatically, leading to more robust human recognition without manual labelling or enrolment. On the other side of the coin, we also study the privacy implications of such cross-modal identity association.

Research Output: [WWW’2020], [WWW’2019], [IoT-J’2019], [UbiComp’2018], [ISWC’2018], [IPSN’2017]